- January/February 2022

The Digital Twin Knows it All

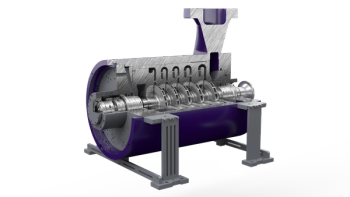

The latest buzzword in the turbomachinery industry is the digital twin. It’s being praised as the holy grail for everything from failure prevention and maintenance reduction to life extension, improved operation, and parts forecasting. While the digital-twin concept offers definite value for machinery operation, some of these claims stretch the truth. It’s worthwhile to discuss what a digital twin really is, what it can do, and where its limitations lie.

A digital twin is a computer-based virtual representation and simulation of a real physical object or process. Although the idea has been around for a long time, the basic concept and definition were introduced in 2002 by Michael Grieves, then at the University of Michigan, as a model for Product Lifecycle Management. NASA then coined the term in 2010 in a Roadmap Report. The digital twin consists of three parts: the physical object, the digital or virtual product, and the connections between the two. The concept has branched out into different types, such as the digital-twin aggregate (DTA), where multiple digital twins operate in parallel to simulate a complex system.

Implementing a simple digital twin or a more complex DTA requires data about the real-time status of the real-life object, as well as the ability to operate, maintain, or repair the real system remotely via a parallel digital twin representation. Think of Apollo 13, where the ground-based engineers were able to determine how to rescue the mission remotely by simulating all operations and failure scenarios to identify a viable solution. Thus, “the ultimate vision for a digital twin is to be able to create, test and build [our] equipment in a virtual environment” (John Vickers, NASA, 2010).

In its purest form, the digital twin is a product and technology development tool. That means it is also an alternative to big data approaches, where correlation is assumed to be a proxy for causation. The digital twin is essentially a computer simulation program that uses real-life data to create simulations that can predict how a physical asset will perform. Artificial intelligence, self-learning, and software data analytics can also be utilized to enhance and refine the output.

But, to quote Lewis Carroll’s Humpty Dumpty in Through the Looking-Glass, “When I use a word, it means just what I choose it to mean.” So, what does “digital twin” mean in current turbomachinery discussions about condition monitoring, predictive interventions, condition-based repair and process optimization?

The distinction between a simulation model and a digital twin is not clear-cut. The basic components of a digital twin are a model and some real-life operational data. That’s nothing new. For decades, we have used performance models, such as performance curves or compressor maps, to determine the health of equipment by simply comparing the expected behavior (from the curves) to measured performance data. The often-quoted distinction that “a digital twin without a physical twin is a model” does not help, because the performance curves actually reflect the behavior of a real machine.
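The curve-versus-measurement comparison described above can be illustrated with a minimal Python sketch. The curve points, units, and function names are hypothetical and chosen only for illustration; a real compressor map would also depend on speed, gas properties, and inlet conditions.

```python
from bisect import bisect_right

# Hypothetical performance curve at one fixed speed:
# (inlet flow in m^3/s, head in kJ/kg). Values are illustrative only.
CURVE = [(2.0, 60.0), (3.0, 55.0), (4.0, 46.0), (5.0, 32.0)]


def expected_head(flow, curve=CURVE):
    """Linearly interpolate the expected head from the performance curve."""
    flows = [f for f, _ in curve]
    if not flows[0] <= flow <= flows[-1]:
        raise ValueError("flow outside the mapped range")
    # Index of the curve point just above the requested flow.
    i = min(bisect_right(flows, flow), len(curve) - 1)
    (f0, h0), (f1, h1) = curve[i - 1], curve[i]
    return h0 + (h1 - h0) * (flow - f0) / (f1 - f0)


def head_deviation(flow, measured_head, curve=CURVE):
    """Relative deviation of measured head from the expected head."""
    expected = expected_head(flow, curve)
    return (measured_head - expected) / expected


# A negative deviation means the machine delivers less head than the curve predicts.
print(f"{head_deviation(3.5, 48.0):+.1%}")
```

A persistent negative deviation would be one indication of degradation, although a single reading says little until measurement uncertainty is taken into account.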

The added feature of a true digital twin versus a simple model is that a digital twin can adapt to changes of the physical object over time. For example, if a machine shows wear or performance degradation, a true digital twin can adjust, whereas a simple model simulation cannot. The digital twin thus adds value by representing the current state of the machine more accurately. This can be of significant value when scheduling maintenance and repairs or predicting remaining life, since the digital twin’s updated parameters directly relate to known physical failure mechanisms.
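As a rough illustration of what "adapting" can mean, the sketch below tracks a single head scale factor that multiplies the baseline curve prediction and is nudged toward each new measurement. The one-parameter degradation model, the exponential-smoothing update, and the gain value are assumptions made for this example, not a method described in this article.

```python
def update_scale_factor(scale, baseline_head, measured_head, gain=0.1):
    """Move the head scale factor a fraction `gain` toward the observed ratio.

    scale:         current estimate (1.0 means an as-new machine)
    baseline_head: head predicted by the unmodified model at this operating point
    measured_head: head measured on the real machine
    """
    observed = measured_head / baseline_head
    return scale + gain * (observed - scale)


def track(baseline_head, measurements, gain=0.1):
    """Run the update over a series of measurements, starting from as-new."""
    scale = 1.0
    for measured in measurements:
        scale = update_scale_factor(scale, baseline_head, measured, gain)
    return scale


# Hypothetical case: the machine consistently delivers 95% of baseline head.
print(round(track(50.0, [47.5] * 100), 3))
```

A small gain makes the estimate slow to react but less sensitive to noisy readings; a static model corresponds to a gain of zero.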

One of the key insights of digital-twin operation is that the simulation model used in the background does not have to be complicated. The use of performance curves noted above can be part of a digital twin. But updating the model to the current state of the machine using measured data is not trivial. Measured data is always subject to condition, measurement, and statistical uncertainties. The digital-twin correction using actual data (from operations, field, and factory testing) must be treated as a statistical process: the more data, the better the model improvement. Sourcing test data from multiple (more or less) identical machines helps significantly when correcting the model. This means that a manufacturer with a large number of machines in operation (gas turbines, steam turbines, compressors, etc.) has an advantage when building a digital twin.
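One way to see why more data helps is inverse-variance weighting, a standard technique for combining independent estimates that each carry their own uncertainty. The sketch below is illustrative only: the function name and the example numbers are hypothetical, and it assumes the estimates are independent and unbiased, which fleet data may not fully satisfy.

```python
from math import sqrt


def fuse_estimates(estimates):
    """Combine independent estimates by inverse-variance weighting.

    estimates: list of (value, standard_uncertainty) pairs, for example a
               correction factor estimated separately on each machine.
    Returns the combined value and its standard uncertainty.
    """
    if not estimates:
        raise ValueError("at least one estimate is required")
    if any(sigma <= 0 for _, sigma in estimates):
        raise ValueError("uncertainties must be positive")
    weights = [1.0 / (sigma * sigma) for _, sigma in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, sqrt(1.0 / total)


# Hypothetical correction factors from four similar machines.
fleet = [(0.96, 0.02), (0.94, 0.02), (0.95, 0.02), (0.95, 0.02)]
value, sigma = fuse_estimates(fleet)
print(f"{value:.3f} +/- {sigma:.3f}")
```

With equal uncertainties, the combined uncertainty falls with the square root of the number of machines, so four machines halve it relative to one.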

The minimum requirements for the model used in a digital twin are simply that it is sufficiently physics-based, accurate, and quick to run. The concept of a digital twin, leaving all the hype aside, is based on existing and mature technologies. Overuse of the term may lead to underutilization when potential adopters become cynical about the buzzwords and shiny presentations. Using the concept requires trust in the model, the data, and the algorithms used to update the model. Supervision by human experts is still required for checking the plausibility of the model outcomes and digital-twin recommendations.

Klaus Brun is the Director of R&D at Elliott Group. He is also the past Chair of the Board of Directors of the ASME International Gas Turbine Institute and the IGTI Oil & Gas Applications Committee.

Rainer Kurz is the Manager of Gas Compressor Engineering at Solar Turbines Incorporated in San Diego, CA. He has been an ASME Fellow since 2003 and is the past chair of the IGTI Oil and Gas Applications Committee.

Any views or opinions presented in this article are solely those of the authors and do not necessarily represent those of Solar Turbines Incorporated, Elliott Group, or any of their affiliates.
